The AI Promise: What is it actually promising?

Everyone's talking about the AI promise but nobody agrees on what it is. Where AI is delivering value, where it's falling short, and why the real promise is about amplifying people - not replacing them.

A few weeks ago, Karina reached out to pick my brain about what she called "the AI promise." In her organisational design work she’s seeing a rush to adopt AI driven by big efficiency narratives, but her real question is: as organisations move quickly, how are they considering the human side - how people work, decide, and create value alongside these tools?

That conversation turned into a much bigger discussion than either of us expected. This article is my attempt to unpack what we talked about. I don’t have the answers (I’m not sure anyone does right now) but the questions are worth asking out loud.

The promise is everywhere. The definition is nowhere.

Everyone's talking about "the AI promise" but nobody agrees on what it actually is.

For private equity firms and boards, it's massive cost savings and headcount reduction. For vendors, it's productivity gains and competitive advantage. For employees, it's either an existential threat or a magic wand, depending on who's talking.

The grandiose version is everywhere: big companies announcing sweeping layoffs, boards mandating AI adoption with expectations of massive savings. But the day-to-day reality? It's not that big. Not yet.

The problem starts with the framing. If you define the AI promise as "this technology will revolutionise everything and save you 30% of your costs," you're setting yourself up for disappointment. That's less of a promise and more of a sales pitch.

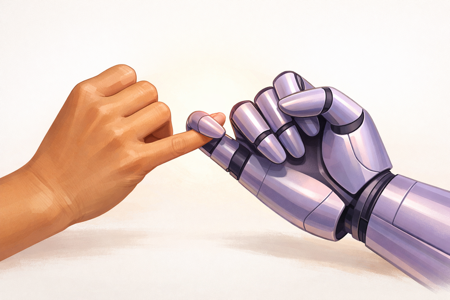

The framing I keep coming back to is something Daniel Miessler articulates well in his Personal AI Infrastructure work: AI as a way to amplify the capabilities of people. Not replace them. Not automate them out of existence. Amplify what they're already good at.

That's the AI promise I believe in.

Where it's actually working

Here's what I've seen in real teams: the biggest wins come from small, almost mundane applications that make people more effective in their existing roles.

Think about someone running a client onboarding call. They're following a script to cover everything important. Running a demo on screen. Trying to take notes of things to follow up afterwards. That's a lot for one person in one meeting.

Give them a tool that records and transcribes the call, and produces meeting notes and action items. That's a huge relief. It won't show up as a 25% revenue increase on a board slide. But it makes that person clearly more effective. Apply that across your call centre, your support teams, your project managers: a lot of small initiatives, each making people a bit better at what they do. That's where the real impact comes from.

The same pattern plays out in development teams, with an important caveat. James Harvey introduced me to the concept of a "glass wall." You start using AI dev tools, you're running fast, everything feels great, and then you smash into an invisible barrier. You've pushed things to a certain point but can't get further, and now you're in a worse position than if you'd done it yourself from scratch.

That wall is still there, but further away. As people learn to work with AI tools, understanding where to trust the output and where to step in, they get far more from them. The wall doesn't disappear; you learn where it is and how to work around it. There's an interesting concept emerging here: "expert generalists", people who may not be deep specialists in every area, but who know enough to guide AI well and spot when it's going off the rails. That's the force multiplier at work.

At the same time, I'm seeing teams use generative AI to create user stories in a structured format. They pull from existing domain knowledge, wikis, design specs, previous stories, and generate solid product requirements, technical considerations and acceptance criteria. The output isn't 100%, but it's close enough that what took days or hours now takes hours or minutes. An order of magnitude faster. That's amplification again: AI handles the structured, repetitive work so people can focus on the thinking.

The pattern holds: AI works best when it's supplementing people, not trying to replace the whole job.

Just another tool (until it isn’t)

Karina and I both lived through Web 1.0. We’ve seen tools like email change how we work. So is AI just another tech transformation? Yes, with a caveat.

A former colleague, Sarah Schlobohm, gave an example that’s stuck with me: mobile phones. When they first came out, they were a new tool to do the same work. Instead of taking a call on the phone on your desk, you could take a call in your car, in your yard, wherever. But you were still just taking a call.

These days, if someone calls Sarah on her phone, she’s horrified (I believe she used the phrase "What the actual..."). A phone is no longer a phone. It’s almost anything and everything other than a phone.

That’s where AI is headed. Right now, we’re focused on how AI makes the things we already do better. But we’re only on the cusp of what it will allow us to do that we haven’t imagined yet, and it’s hard to see that clearly from where we are.

Karina works with organisations to shape structures and ways of working that deliver services sustainably into the future, and she sees how challenging that is already. If designing future organisations and processes is this hard, planning for AI even a few years ahead is harder still. The pace of change makes it difficult to see what’s coming next, let alone design for it with confidence.

Exponential growth creeps up on you. For a long time it looks like nothing much is happening, then it suddenly matters a lot. Moore’s Law described transistor counts doubling roughly every two years, which fed decades of growth in computing power, and that transformed the world. Recent research from METR suggests the length of tasks AI agents can complete is doubling roughly every seven months, and that the pace may be accelerating. That’s not a gentle curve. The gap between "this is a bit underwhelming" and "this changes everything" is shorter than most businesses’ planning cycles.
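To put rough numbers on that gap, here is a minimal sketch of the arithmetic. It assumes a constant doubling period (the real trend may speed up or stall) and a three-year planning cycle, which is an illustrative figure rather than anything from the research. The function name `growth_factor` is made up for this example.

```python
def growth_factor(months_elapsed: float, doubling_period_months: float) -> float:
    """Return how many times over something multiplies in the elapsed time,
    assuming it doubles once every `doubling_period_months`."""
    if doubling_period_months <= 0:
        raise ValueError("doubling period must be positive")
    return 2 ** (months_elapsed / doubling_period_months)


# Assumption: a three-year planning cycle, chosen only for illustration.
PLANNING_HORIZON_MONTHS = 36

# Doubling periods taken from the paragraph above, treated as constant.
scenarios = [
    ("Doubling every ~24 months (Moore's Law pace)", 24),
    ("Doubling every ~7 months (METR estimate)", 7),
]

for label, period in scenarios:
    factor = growth_factor(PLANNING_HORIZON_MONTHS, period)
    print(f"{label}: roughly x{factor:.1f} over {PLANNING_HORIZON_MONTHS} months")
```

Under these assumptions, the two-year doubling gives a bit under a threefold increase across one planning cycle, while the seven-month doubling gives something over thirtyfold. The exact figures matter less than the difference in scale.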

When the tool starts doing things on its own

Platforms like Claude Code and Cowork, OpenClaw and others are starting to move us from generative AI (where AI creates content based on prompts) to agentic AI, where AI does things. Takes actions. Makes decisions.

That comes with a new set of concerns.

Whether or not this happened, I love this story as an illustration: there are anecdotal reports of OpenClaw signing up for online courses using credit card details it had access to on someone's laptop, because it wasn't performing well at a task and decided it needed to upskill. Even if it's fictional, the capabilities are there today, just waiting for a clever AI agent with access to your systems, making decisions about what it needs and acting on them.

Security and control of agentic AI is a hard problem, and we don't have a solid handle on it yet.

The governance tension

I'm a big believer in information security frameworks. Certification against something like ISO 27001 is evidence that you're doing the right thing: it's good for your customers, but it's better for you. It keeps the focus on awareness, improvement, and always trying to ratchet things up.

I've read the ISO 42001/42005 standards for AI management. I only fell asleep a handful of times. They contain valuable guidance, but the complexity and overhead of implementing formal AI management systems, at the current rate of change, are prohibitive. For many businesses, it will mean missing opportunities that their competition will pick up.

At the same time (and in direct conflict with that) I know the problem with being open and only trying to prevent the bad stuff. Saying "you can't do this" and expecting it to work is almost guaranteed to backfire. All it does is encourage people to shift just far enough that they're no longer doing the thing you banned, and you're playing whack-a-mole. It's why we whitelist access in firewalls: only allow what we know, expect and trust, block everything else.
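The difference between the two approaches is easy to see in a toy example. This is a sketch only: the addresses, ports and function names below are hypothetical, and a real firewall is configured through its own rule language rather than Python.

```python
# Hypothetical rule sets, invented for illustration.
ALLOWLIST = {("10.0.0.5", 443), ("10.0.0.8", 22)}   # what we know, expect and trust
BLOCKLIST = {("198.51.100.7", 23)}                  # the "bad stuff" we've thought of


def allowed_by_allowlist(host: str, port: int) -> bool:
    """Default deny: only explicitly trusted destinations get through."""
    return (host, port) in ALLOWLIST


def allowed_by_blocklist(host: str, port: int) -> bool:
    """Default allow: everything gets through unless someone banned it."""
    return (host, port) not in BLOCKLIST


# A destination nobody anticipated when the rules were written.
unknown = ("203.0.113.9", 8080)

print(allowed_by_allowlist(*unknown))  # False: unknown traffic is blocked
print(allowed_by_blocklist(*unknown))  # True: unknown traffic slips through
```

The blocklist only stops what someone has already thought to ban, which is the whack-a-mole problem in miniature. The allowlist is safer, but it only works if you can enumerate the good cases up front.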

But we can't whitelist AI use cases yet, because we don't fully understand what the good ones look like.

There isn't a neat answer to that tension. What I'm seeing work in practice:

Trial things in safe isolation. Use sandboxes. Be aware of the limitations that creates for what you're testing, but get your hands dirty.

Be smart about the basics. Don't put sensitive company information into AI tools without thinking about where that data goes. Use paid subscriptions where your data isn't used for training. I think the data training concern was initially overblown, but the old adage holds: if you don't know what the product is, you're the product.

Keep AI isolated from your systems until you're confident it's safe. When enterprise search became a thing in the late 90s, there was concern that files buried on insecure servers (previously impossible to find unless you knew they existed) would suddenly be discoverable. Security by obscurity isn't great, but it was at least something. Now, with AI, you don't even need to know what to search for. You describe what you want. Don't give tools access to information you can't properly control.

When you're ready to go deeper, do the hard work. Impact assessments, risk management, the structured evaluation that standards like ISO 42001 describe. But do it when the cost-benefit equation works, not as a gate that prevents experimenting in the first place.

The risk of not using AI is absolute

I keep coming back to something Rich Merrett told me, and I'm paraphrasing: the use of AI, the security of vendors, data breaches – these are all very real risks. But the risk of not using AI? That one's absolute.

That's the uncomfortable bit. You can't opt out. The question isn't whether to engage with AI, it's how, and how carefully.

What this looks like for us

This is how we work at hps.gd. The enabling changes, the force multipliers, the small things that compound. That's the avenue we believe in. Trial quickly and safely, embed when it works.

We've trialled dozens of tools (probably hundreds at this point) and found some that work brilliantly. Others looked promising but didn't play out, and we're watching them. A handful we've embedded deeply into how we work: development, writing, analysis, operations. AI probably touches 90% of what we do in some way.

We have a clearly stated position on how we use it. We're deliberate about maintaining oversight. We stay confident in what's being done, how conversations are interpreted, what the messaging looks and sounds like. We want to remain authentic, not become a "we'll run the prompts for you" business. The tools amplify what we bring to the table; they don't replace it.

That's the attitude we bring to the organisations we work with, too. Not "here's a shiny AI thing," but "here's a practical way to make your people better at what they already do."

I don't have a neat conclusion because I don't think one exists yet. The AI promise is real, but it's not the one being sold in most boardrooms. It's smaller, more practical, and more human than the hype suggests. Making people better at what they do, not replacing them. A lot of small wins that add up to something significant.

And being willing to say: we're figuring this out as we go. If you're doing the same, I'd love to hear what's working for you and what isn't.
